What is the best method to centralize data collection and/or history metadata related to SQL Server Instances? This is a common question that a lot of companies/developers ask themselves. A lot of solutions, especially pre SQL Server 2008, require objects to exist on each participating instance, or require that you create a batch process that loops through a text file of server names. SQL Server 2008 introduced a centralized storage mechanism to collect and manage performance data for 1 or more instances, aliased as Data Management Warehouse. Here is a great link that briefly covers the new 2008 feature, http://www.simple-talk.com/sql/learn-sql-server/sql-server-2008-performance-data-collector/. Today I will be focusing on a highly scalable SSIS solution. SSIS offers a lot of flexibility, scalability and has a lot of different security measures, that are not available to TSQL and command line programming. For those unfamiliar with SSIS, SSIS (SQL Server Integration Services) is a tool used to transform and load data amongst a variety of sources including SQL Server, flat files, Oracle, AS400, MS Access etc. In a recent blog post, I demonstrated how to setup a new SSIS package and how to dynamically change the destination file name. This post has a lot of meat for beginners and may be worth a read, http://jahaines.blogspot.com/2009/07/ssis-dynamically-naming-destination.html. I will not get into the particulars of creating the SSIS package here, but will recommend that any persons not familiar with SSIS to read the above linked post.

Let’s get started by opening SSMS and browsing to the SQL Server instance you want to use as your central data store. I chose to create a new database called “SQLMngt_DW”. The ultimate plan for this database is to store job history metadata for all SQL Server Instances. Feel free to create your database where you see fit.

USE [master]GOCREATE DATABASE [SQLMngt_DW]

GO

Now lets create a table to store server names.

USE [SQLMngt_DW]GOIF EXISTS(SELECT 1 FROM sys.tables WHERE NAME = 'Servers')

BEGINDROP TABLE dbo.[Servers];

ENDGO--Create a Servers table to house all your instances

CREATE TABLE dbo.Servers(

InstanceId INT IDENTITY(1,1) PRIMARY KEY CLUSTERED,

InstanceName VARCHAR(50));

GO

Now we can insert some ServerNames into our dbo.Servers table.

USE [SQLMngt_DW]GOINSERT INTO dbo.Servers VALUES ('Instance1\dev');

INSERT INTO dbo.Servers VALUES ('Instance2\dev');

**Note: Make sure to insert some instance names into the dbo.Servers table; otherwise, your package will timeout when trying to dynamically change the connection string. Also, make sure you enter valid instance names. The names above are dummy server names, you should alter the inserts for you environment.

We need to create on last table, called JobHistory. This table will store job history data for all servers listed in the servers table.

IF EXISTS(SELECT 1 FROM sys.tables WHERE NAME = 'JobHistory')

BEGINDROP TABLE dbo.[JobHistory];

ENDGO--Create a job history table

CREATE TABLE dbo.JobHistory(

JobHistoryId INT IDENTITY(1,1) PRIMARY KEY CLUSTERED,

ServerName VARCHAR(50),JobId UNIQUEIDENTIFIER,

JobName VARCHAR(50),RunDate DATETIME,

JobStatus VARCHAR(11),Duration VARCHAR(10))

Now that we have our database and tables, we can start creating our package. Create a new SSIS package and create two variables. One variable will need to be of object type and the other of string, as shown below. The value for the string variable, which I named “connectionString” should have a value equal to the server name where you placed your centralized database.

Next drag the Execute SQL Task component to the canvas. Your canvas should look like below.

Open the Execute SQL Task and configure the general tab, as shown below. Make sure to create a connection to the instance where you created your database and JobHistory table. I named my connection SQLMngt_DW.

The code for the SQL Statement is below.

select InstanceName from dbo.Servers

Now, we need to configure the result set tab. Make the appropriate configurations, as shown below.

Our SQL Task is fully configured. We can proceed in creating the ForEach task. Drag the ForEachLoop Container to the canvas. Make sure to create a precedence constraint between the SQL Task and the loop. Essentially, all you need to do is drag the green arrow from the SQL task to the ForEachLoop Container. Once the loop container is in place, you have to drag a data flow task into the container. Your canvas should look like the screenshot below.

Now we have to configure both controls. Let’s start with the loop. Double-click the foreachloop container to configure the properties. I will start with the collection tab.

Next configure the variable mappings tab, as shown below.

Now, we have to configure the Data Flow Task. Create both a source and destination OLE DB task, within the Data Flow Task, and create a precedence constraint between the two. Once the source and destination have been created, you should create a new OLEDB connection. This connection string needs to point to any valid Instance. Note: It does not matter which instance you choose. I have chosen to point my new connection to the same server as the SQLMngt_DW. I named my new connection dyn_source (dynamic source). Your canvas should look like below.

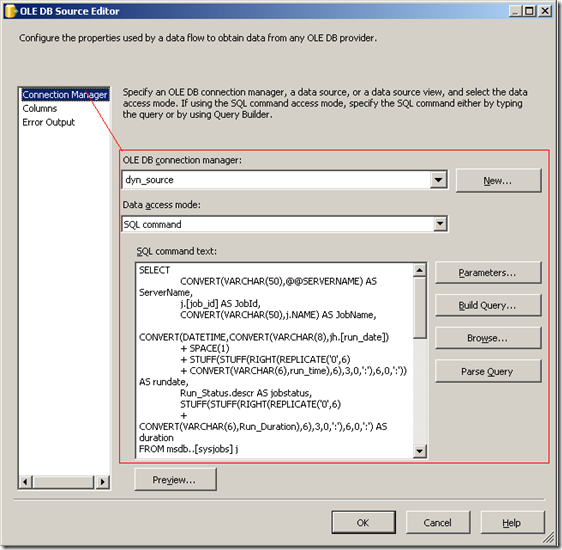

Now open the OLE DB Source, in the data flow task and configure it, as shown below.

Note: The code for the SQL Command is below the screenshot.

SQL Command Code:

SELECT CONVERT(VARCHAR(50),@@SERVERNAME) AS ServerName,

j.[job_id] AS JobId,CONVERT(VARCHAR(50),j.NAME) AS JobName,

CONVERT(DATETIME,CONVERT(VARCHAR(8),jh.[run_date])

+ SPACE(1) + STUFF(STUFF(RIGHT(REPLICATE('0',6)

+ CONVERT(VARCHAR(6),run_time),6),3,0,':'),6,0,':')) AS rundate,

Run_Status.descr AS jobstatus,STUFF(STUFF(RIGHT(REPLICATE('0',6)

+ CONVERT(VARCHAR(6),Run_Duration),6),3,0,':'),6,0,':') AS duration

FROM msdb..[sysjobs] jINNER JOIN msdb..[sysjobschedules] jsch

ON j.[job_id] = jsch.[job_id]INNER JOIN msdb..[sysschedules] sch

ON jsch.[schedule_id] = sch.[schedule_id]INNER JOIN msdb..[sysjobhistory] jh

ON jh.[job_id] = j.[job_id]INNER JOIN(

SELECT 0 AS run_status ,'Failed' AS descr UNION ALL

SELECT 1,'Succeeded' UNION ALL

SELECT 2,'Retry' UNION ALL

SELECT 3,'Canceled' UNION ALL

SELECT 4,'In progress'

) AS Run_Status ON jh.[run_status] = Run_Status.[run_status]WHEREj.NAME NOT LIKE 'Tivoli%' --tivoli backups

AND j.[enabled] = 1 AND sch.[enabled] = 1AND jh.[step_name] = '(Job outcome)'

AND CONVERT(DATETIME,CONVERT(VARCHAR(8),jh.[run_date])

+ SPACE(1) + STUFF(STUFF(RIGHT(REPLICATE('0',6)

+ CONVERT(VARCHAR(6),run_time),6),3,0,':'),6,0,':'))

BETWEEN DATEADD(DAY,DATEDIFF(DAY,0,GETDATE()),0)

AND DATEADD(DAY,DATEDIFF(DAY,0,GETDATE())+1,0)

Make sure to click the columns tab so SSIS can automatically set your columns for you.

Now let’s configure the destination. The destination is actually quite simple. We have to open the properties and choose our SQLMngt_DW source and the JobHistory table, as shown below. Make sure to select the mappings tab to verify all the columns are aligning properly. You may see the JobHistoryId column is set to ignore, which is fine because this is an identity column and we do not want to insert directly into it.

Now we get to do the fun part :-), which is setting up the dynamic connection string via the connectionstring variable. Right-click the dyn_source connection string and click properties. Once the properties display, you can click the ellipses next to expressions and choose connectionstring. Click the ellipses in the expression textbox to open the expression editor and paste the following expression:

"Data Source=" + @[User::ConnectionString] + ";Initial Catalog=msdb

;Provider=SQLNCLI10.1;Integrated Security=SSPI"

Note: I am using Integrated security. If your connection string is use SQL Authentication, you will need to supply username and password in the connection string.

Believe it or not, that is it!!!! Click on the play sign at the top of the SSIS application to run the package. We should see all tasks light up green, unless something is not configured correctly, in which case you will see red.

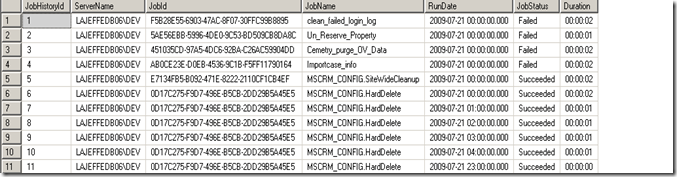

Our last step is to validate our package did what it is supposed to do. We can validate this by querying our job table.

There you have it. You now have a very flexible and scalable method to collect data from one or more sources. The SSIS method really beats having to manage a command line solution or having to manage/deploy stored procedures to each server. Configuring the SSIS package is not that tedious and can be done fairly quickly. It actually takes a lot longer to describe how to do this, than actually do it. Once you get use to using SSIS, this process should only take you 10-15 minutes to setup and configure. I hope that you have learned something new and I know this will help some of you out there. I know this post may seem overwhelming for those of you have not really had the opportunity to dive into SSIS yet, so I have provided a link, where you can download the project. Please be aware that I am using SQL 2008 and BIDS 2008, so my package will not work with BIDS 2005, as the package structure changed in BIDS 2008.

Download the project files: http://cid-6f041c9a994564d8.skydrive.live.com/self.aspx/.Public/SSIS%7C_Data%7C_Collection/SSIS%7C_DataCollection.zip

Happy coding!!

4 comments:

I believe you may also know about the fix Excel utility

how to remove error:

Error: 0xC004700C at Data Flow Task, SSIS.Pipeline: One or more component failed validation.

Error: 0xC0024107 at Data Flow Task: There were errors during task validation.

Warning: 0x80019002 at Foreach Loop Container: SSIS Warning Code DTS_W_MAXIMUMERRORCOUNTREACHED. The Execution method succeeded, but the number of errors raised (5) reached the maximum allowed (1); resulting in failure. This occurs when the number of errors reaches the number specified in MaximumErrorCount. Change the MaximumErrorCount or fix the errors.

Warning: 0x80019002 at Package: SSIS Warning Code DTS_W_MAXIMUMERRORCOUNTREACHED. The Execution method succeeded, but the number of errors raised (5) reached the maximum allowed (1); resulting in failure. This occurs when the number of errors reaches the number specified in MaximumErrorCount. Change the MaximumErrorCount or fix the errors.

SSIS package "Package.dtsx" finished: Failure.

how to remove error:

Error: 0xC004700C at Data Flow Task, SSIS.Pipeline: One or more component failed validation.

Error: 0xC0024107 at Data Flow Task: There were errors during task validation.

Warning: 0x80019002 at Foreach Loop Container: SSIS Warning Code DTS_W_MAXIMUMERRORCOUNTREACHED. The Execution method succeeded, but the number of errors raised (5) reached the maximum allowed (1); resulting in failure. This occurs when the number of errors reaches the number specified in MaximumErrorCount. Change the MaximumErrorCount or fix the errors.

Warning: 0x80019002 at Package: SSIS Warning Code DTS_W_MAXIMUMERRORCOUNTREACHED. The Execution method succeeded, but the number of errors raised (5) reached the maximum allowed (1); resulting in failure. This occurs when the number of errors reaches the number specified in MaximumErrorCount. Change the MaximumErrorCount or fix the errors.

SSIS package "Package.dtsx" finished: Failure.

I feel SSIS is the most useful and interesting tool to create dashboards and find the loopholes in a particular program.

SSIS Upsert

Post a Comment