This is part two of a two part series. In part 1 of this series I demonstrated the most popular methods used to parse/split a delimited string of values, http://jahaines.blogspot.com/2009/11/splitting-delimited-string-part-1.html. In this article I will be focusing on the performance implications of each method presented. I will start of by giving a disclaimer that your results may vary from the results presented in this article. Although the numbers may differ, the data trend should be somewhat consistent with my results. I will be tracking three key performance counters: CPU, Duration, and Reads against varying string sizes and data loads. In addition to a varying delimited strings length and load, I have performed each query 10 times and taken an average. I did this to ensure that I get the most accurate results. Enough talk let’s dive into our first test.

Note: I am not going to walk through how I did each test, but I will link all my scripts at the bottom of this post

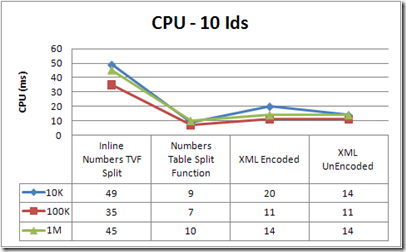

The first test is testing how CPU usage differs between methods. I tested a delimited string of exactly 10 Ids over a table with 10,000 rows, 100,000 rows and 1,000,000 rows. As you can see, the permanent numbers table TVF is by far the best solution. The CPU is highest on the inline Numbers TVF. The inline numbers table is the most expensive because SQL Server has to do a lot of processing and calculation on the fly, whereas the permanent numbers table mostly has to read data, which means the IO will be much higher. Both XML methods perform much better than the inline numbers table, but are nearly twice as slow as the permanent numbers TVF because they require more CPU intensive processing, which is derived from SQL converting the data to XML and transforming the XML back to a relational format. It takes more processing power to encode and decode the XML than to simply convert it, so you should only use the encode/decode method, if your data contains special XML characters, http://msdn.microsoft.com/en-us/library/aa226544(SQL.80).aspx.

Winner of Round 1: Numbers Table Split Function

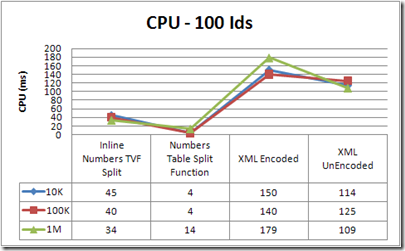

The next test was performed on a table with 10,000, 100,000 rows, 1,000,000 rows, using a string consisting of 100 Ids. If you look at the chart below, you will see a trend. As the number of Ids increases, so does the cost of the XML method. CPU usage actually increases at a exponential rate, which makes it the least scalable solution. Obviously the Numbers Table TVF is the clear winner here.

Winner of Round 2: Numbers Table Split Function

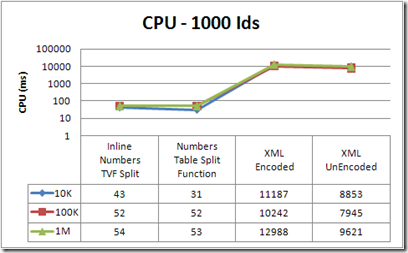

The final test was taken over the same load, but I supplied a string of 1000 Ids. As you can see with 1000, ids the results depict more of the same behavior. The big take away is the XML method should only be used when the number of values in the delimited string is relatively small.

Winner of Round 3: Numbers Table Split Function

The next counter I will be focusing on is Duration. Duration is not a very reliable counter, as it is very dependent on other processes running on the machine; however, it does provide insight to performance. The first test will be done over the same load. I will begin with 10 Ids again.

The results are a little more aligned in this test. The Numbers Table Split TVF is the best performing on average, followed by the XML methods. Again there is a higher performance cost to encode XML, so do so only when necessary. Duration does give you a general idea about performance, but these results definitely do not carry as much weight as the other counters.

Winner of Round 4: Numbers Table Split Function

The next step is to increase the number of Ids to 100. I wont repeat the same stuff over again I promise. This test yields more of the same.

Winner of Round 5: Numbers Table Split Function

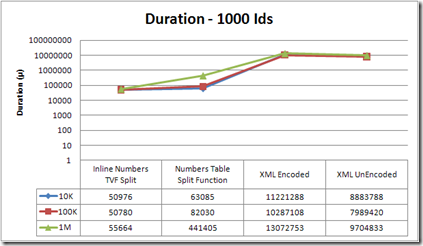

Next, I bump the number of Ids to 1000. Here we go….. a different result :) . In this example, the numbers table actually performed worse than the inline number TVF. You may be wondering why the duration is worse for the numbers table split function. I suspect the answer is the number of reads makes the query take longer to execute; however, there could have been something running on my machine when profiler captured the data. This is a prime example of why duration can be an unreliable counter. I cannot tell if the query is actually worse or if an environmental factor on my laptop may have skewed the timing. I will take the high road :^) and assume the reads impacted the timing because I had no known programs running. The XML results are just disturbing…… For as much as I recommend XML split solutions on the forums, these results are just scary.

Winner of Round 6: Inline Numbers TVF Split

The final counter I will be testing is reads. This is by far one of the most important counters because it impacts so many facets of SQL Server performance. Do not let the number of reads for the Numbers Table TVF persuade you to avoid it. A permanent numbers table TVF is going to have more reads. Essentially you are reading table values from cache/disk instead of calculating them, so the numbers of reads is greater. The obvious choice for this test is the inline numbers table TVF.

Winner of Round 7: Inline Numbers TVF Split

The next test increases the number of Ids to 100. As for the results, we see more of the same.

Winner of Round 8: Inline Numbers TVF Split

Finally, I will increase the number of Ids to 1000. Again more of the same. The number or reads, stays relatively consistent across all solutions. The XML solution does better than the numbers table TVF here, but it just does not scale well at any other level. I used to be a firm believer in the XML method, but I think I am going to start primarily recommending the number table TVF or an inline number table TVF.

Winner of Round 9: Inline Numbers TVF Split

The verdict

So what is the verdict? Well, again I cannot reiterate enough that no one solution is always better than another. Different solutions work best in different situations and environments. In my tests, there is a clear winner, the permanent numbers table TVF. Even though this is the “winner”, I have overwhelming evidence that says I should be using a numbers table to split a string, regardless of the numbers table being permanent or inline. I am happy with these results because the permanent numbers table split function performs well and is very easy to implement. Another benefit of the permanent numbers table TVF solution is that it works in all versions of SQL. Why would you not want to use the permanent numbers table? You may be willing to accept more CPU consumption to reduce IO, or perhaps you do not want to maintain another table. If this is the case, an inline numbers table solution is the way to go. All-in-all, the XML method really did surprise me and I find it sad that this method does not perform well. There is just not enough incentive to use an XML solution when an easier and better performing solution exists. Remember that you should test each method in your environment to see which works best in your environment.

I hope that you have learned something and can use this information to make more informed decisions when deciding to find a method to split delimited strings.

**** UPDATE *****

I did have some bugs in the code for the 1000 ids test. I guess my relaxed brain never came home from vacation. A special thanks goes out to Brad Schulz for spotting the bug. I am glad that the end result is for the most part the same. The only real deviation occurred in the reads category. The numbers of reads should be comparable for all methods because the same amount of data should be returned, but in my original result set they were not. I resolved the bug and have since updated the scripts and the results.

****UPDATE*****

A good friend and fellow SQL server enthusiast Brad Schulz recently posted a great entry, on parsing delimited strings with XML. His findings show how using the XML method in certain ways can cause the query to really bomb; however, you can avoid some performance penalties by casting and storing the delimited string in a XML data type, instead of casting and parsing the XML inline. I will not go into detail about why the inline XML is slower because I want you to read it right from the horse’s mouth, http://bradsruminations.blogspot.com/2009/12/delimited-string-tennis-anyone.html. When I changed my code to use a XML variable, the XML methods were just as performant as the numbers table methods. I do not know about you, but I am ecstatic. I for one love the XML method and am very excited to see that it is and can be just as performant, when used in the right context.

Download the script files: http://cid-6f041c9a994564d8.skydrive.live.com/self.aspx/.Public/Split%20Delimited%20String/BlogPost^_UnpackDelimitedString.zip

10 comments:

Nice Article.

I like the TVF over the physical TABLE simply because the idea of a numbers TABLE irks me. Same with a calendar TABLE. I haven't implemented either, because i haven't needed them (and i would, if i did). With this information, it looks like if i ever do it, the TVF will do the job.

Hi Adam...

Thanks for all the research.

I want to make sure I understand... In your first article in the series, you JOINed a 10,000-row table with a variable that contained a list of N comma-separated values.

Is that what you did in this exercise? In other words, JOINed a 10,000-row (or 100,000-row or 1million-row) table with a variable that contained a list of 10 or 100 or 1000 items?

Or did you have a table (of 10K or 100K or 1M rows) that CONTAINED a column that consisted of a comma-separated list that you expanded for each row?

The reason I ask is because I would think that the first scenario would only perform the split one time (on the one variable), creating a virtual table of 10 or 100 or 1000 rows to be JOINed, whereas the second scenario would expand the 10K or 100K or 1M table into a maximum of 10K*1000 or 100K*1000 or 100million rows (and would therefore perform the split function up to a million times).

--Brad

Hi Brad.

Thanks for the feed back. In these tests, I built a string of 10/100/1000 Ids and put the value into a table. I then set a local variable to the Ids string and directly joined it to the table. The tests are equivelent to the code I used in part one of the series.

Essentially I did this before each execution.

set @var = (select col from table)

then do the code to split

Hi Adam...

It's possible that your results for the Numbers Table functions may actually be too LOW.

I downloaded your scripts, and I created the @ID variable with 1000 numbers in it and the T table with 1million rows in it.

The @ID variable ended up being almost 7000 characters long.

HOWEVER... The FN_SPLIT and FN_TVF_SPLIT functions only accept a parameter of 2000 characters, so it ends up cutting off the @ID variable at 2000 chars and only creating a split table of about 300 entries instead of 1000.

Please confirm... Did the functions you used in your testing accept a NVARCHAR(2000) or a NVARCHAR(MAX)?

--Brad

Brad good catch. I think that you are right. I did use nvarchar(2000). I forgot to make the change to accomodate longer strings. I will redo my tests on 1000 ids and update the post.

Thanks.

One more thing...

In your FN_TVF_Split function, your WHERE clause has the predicate: AND N<=1000

That also limited the virtual numbers table to values from 1 to 1000.

When you fix the parameter declarations and take out that N<=1000 predicate, the Reads of all the tests come out over 3000... in other words, more comparable.

I get 3220 for the two XML methods, 3379 for the FN_Split, and 3007 for the FN_TVF_Split.

--Brad

I saw that too Brad. I have no idea how that one snuck in there. I will be updating the results as soon as I can.

Sorry guys!!!

I believe you have also heard about the way of sql server 2005 mdf recovery

Thanks for the info! You may turn your attention on outsource Net development.

I have a similar situation. I start out with a table that has data input into a column from another source. This data is comma delimited coming in. I need to manipulate the data to remove a section at the end of each. So I split the data and remove the end with the code below. (I added the ID column later to be able to sort. I also added WITH SCHEMABINDING later to add an XML index but nothing works. I can remove this ... and the ID column, but I do not see any difference one way or the other):

ALTER VIEW [dbo].[vw_Routing]

WITH SCHEMABINDING

AS

SELECT TOP 99.9999 PERCENT

ROW_NUMBER() OVER (ORDER BY CableID)-1 as ID,

CableID AS [CableID],

SUBSTRING(m.n.value('.[1]','varchar(8000)'),1,13) AS Routing

FROM

(

SELECT CableID,CAST('' + REPLACE([RouteNodeList],',','') + '' AS XML) AS x

FROM [dbo].[Cables]

)t

CROSS APPLY x.nodes('/XMLRoot/RowData')m(n)

ORDER BY ID

Now I need to Concatenate data from the "Routing" column's rows into one row grouped by another column into a column again. I have the code working except that it is reordering my data; I must have the data in the order it is input into the table as it is Cable Routing information. I must also remove duplicates. I use the following code. The SELECT DISTINCT removes the duplicates, but reorders the data. The SELECT (without DISTINCT) keeps the correct data order, but does NOT remove the duplicates:

Substring(

(

SELECT DISTINCT ','+ x3.Routing AS [text()] --This DISTINCT reorders the routes once concatenated.

--SELECT ','+ x3.Routing AS [text()] --This without the DISTINCT does not remove duplicates.

From vw_Routing x3

Where x3.CableID = c.CableId

For XML PATH ('')

), 2, 1000) [Routing],

I tried the code you gave above and it provided the same results with the DISTINCT reordering the data but without DISTINCT not removing the duplicates.

PLEASE HELP!!!

Thanks,

Sherry S.

Post a Comment